In a previous article about low-code in enterprise solutions, we addressed business readers. However, most Habr users are engineers (thanks, Captain Obvious!), and in the comments to that article I saw a fair number of typical objections to LCDPs (low-code development platforms). While those who haven't heard of the Dunning-Kruger effect are already reaching for the downvote button, let's look at the most common misconceptions and concerns.

In our opinion, the most common misconceptions are as follows.

- Some people think that low-code is about using ready-made products rather than a development philosophy.

- Low-code is confused with mature, highly configurable code-first platforms. Some colleagues even cited WordPress as an example.

- Low-code lacks proper DevOps practices (code review, versioning, deployment, etc.), proper code reuse, and other abstractions. In any case, low-code is only suitable for standard solutions, and those are what no-code is for.

- Developers are better off writing code that delivers finished value than building constructors.

- “There's no need to understand low-code; it's some passing fad. We'll keep coding as usual.” Then again, some developers still don't fully understand DevOps either and consider that a legitimate position, so the situation with low-code is not unique.

Why did we decide to raise the topic of low-code and the prospects of the IT industry? I am a physicist by training and an entrepreneur. In the mid-90s, I owned an ISP (Internet service provider). After that, I held roles ranging from engineer at Beeline to managing partner at a company specializing in automation software development, which is my current position and has been for 7 years. So now I find it interesting to think about what will happen tomorrow.

The state of the industry in brief

The level of abstraction in code keeps rising. We started with machine instructions, moved to procedural programming, stopped managing memory by hand, and watched frameworks multiply and high-level languages mature. What will happen tomorrow? Will the level of abstraction in development rise even further, and if so, how?

At the same time, the need for new products and automation is growing. The shortage of specialists in the industry is easy to see in salaries: they are growing faster than labor productivity in the IT sector.

In addition, the longer a development team stays on a project, the deeper it sinks into operational support: more features mean more edits to make.

Development costs are increasing, and at the same time there is a conflict of interest: businesses need to change faster, while developers want interesting tasks. But most of the changes a business needs are not interesting.

The IT industry has always responded to such challenges by raising the level of abstraction and simplifying development. The “assembler era” gave way to the “C++ era”, then came the era of high-level languages that freed developers from manual memory and resource management, and after that the number of frameworks and libraries only kept growing.

There is no reason to expect this trend to change. Let's see whether low-code can continue it.

Is Low-code not a philosophy?

On the Runet, every article about low-code ends with comments about the shortcomings of one product or another. We'll discuss products separately below, but first I suggest thinking about the low-code concept as a whole.

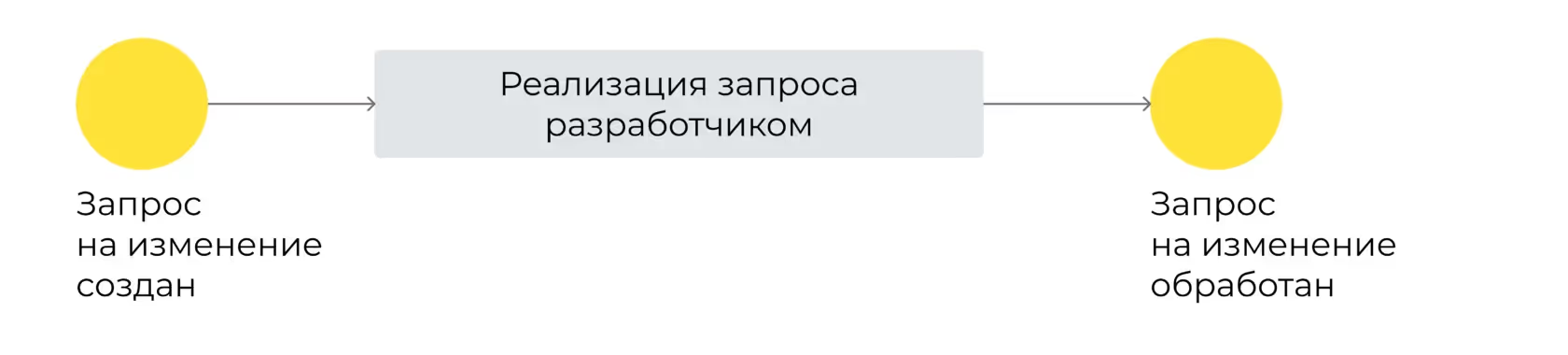

Code-first philosophy

In code-first development, developers deliver the final value to the customer directly. The layout of elements, new fields in entities, calculation logic, and integration flows are all implemented in program code. Yes, such systems have settings that sometimes let you make changes without involving developers, but most change requests still end up with developers.

Developers may have two wishes here.

- How can we write only interesting code and hand boring operational tasks (“move the button”) to someone else? As for operational matters, let people come to us only rarely, and ideally less and less often.

- When operational changes are requested, let the requests be more specific so that communication takes less time. And I'd like it to be easier to understand my own code, which I probably haven't touched in six months.

These wishes are hard to fulfill with a code-first approach.

Low-code philosophy

Imagine that instead of delivering the final value directly to the client, you deliver a constructor that lets others build that value. Of course, you will still have to implement the initial functionality in your constructor yourself, if only to debug it. But along the way, you'll create calls, functions, and components with the following properties.

- They are self-documenting in the vast majority of cases.

- Each component has settings that make it reusable.

Note that code-first platforms also have plenty of settings and are even divided into components. In practice, however, using them does not take most of the operational work off the developer's plate.

- These and other components can be combined to assemble fundamentally new business value. Technically, these are still the same interfaces, business-process components, and integrations, but for the business they represent fundamentally different functionality.

- If you need some highly specific logic (no suitable component exists) and that logic obviously won't be reused, you can add the missing piece by writing a small fragment of code. Unlike in code-first systems, you don't need to write an entire module or microservice: you paste the code into exactly the place where it's needed, without the service boilerplate (“sugar”). Such code is often readable not only by a developer but also by an experienced manager or business analyst, and it is usually 2 to 20 lines long.
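To make the properties above concrete, here is a minimal Python sketch. It is not taken from any real LCDP, and the registry, the `component` decorator, `run`, and the `{"use": ..., "with": ...}` step format are all hypothetical. Components are reusable because their behavior is driven by settings, the process itself is plain data that a visual editor could save, and a one-off rule is added as a short inline fragment.

```python
from typing import Any, Callable, Dict, List

Record = Dict[str, Any]
# A component takes the current record plus its settings and returns a new record.
Component = Callable[[Record, Dict[str, Any]], Record]

# Hypothetical registry of constructor components, keyed by the name used in the editor.
REGISTRY: Dict[str, Component] = {}


def component(name: str) -> Callable[[Component], Component]:
    """Register a function as a named, reusable constructor component."""
    def register(fn: Component) -> Component:
        REGISTRY[name] = fn
        return fn
    return register


@component("set_field")
def set_field(record: Record, settings: Dict[str, Any]) -> Record:
    # Which field and which value come from settings, so the same code is reused everywhere.
    return {**record, settings["field"]: settings["value"]}


@component("rename_field")
def rename_field(record: Record, settings: Dict[str, Any]) -> Record:
    out = dict(record)
    out[settings["to"]] = out.pop(settings["from"])
    return out


@component("script")
def script(record: Record, settings: Dict[str, Any]) -> Record:
    # Escape hatch for one-off logic. Real platforms usually store a code snippet;
    # a Python function stands in for it here.
    return settings["code"](record)


def run(process: List[Dict[str, Any]], record: Record) -> Record:
    """Execute a process that is plain data: a list of {"use": ..., "with": ...} steps."""
    for step in process:
        record = REGISTRY[step["use"]](record, step.get("with", {}))
    return record


# The one-off rule: a few readable lines, no module or service scaffolding.
def discount(r: Record) -> Record:
    return {**r, "discount": 0.1 * r["amount"] if r["amount"] > 1000 else 0.0}


order_process = [
    {"use": "rename_field", "with": {"from": "sum", "to": "amount"}},
    {"use": "set_field", "with": {"field": "status", "value": "new"}},
    {"use": "script", "with": {"code": discount}},
]

print(run(order_process, {"id": 7, "sum": 1500}))
# {'id': 7, 'amount': 1500, 'status': 'new', 'discount': 150.0}
```

The design choice is that `order_process` is data. Reordering steps, renaming a field, or reusing `set_field` in another process requires no new code, and the exceptional rule stays confined to a few lines.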

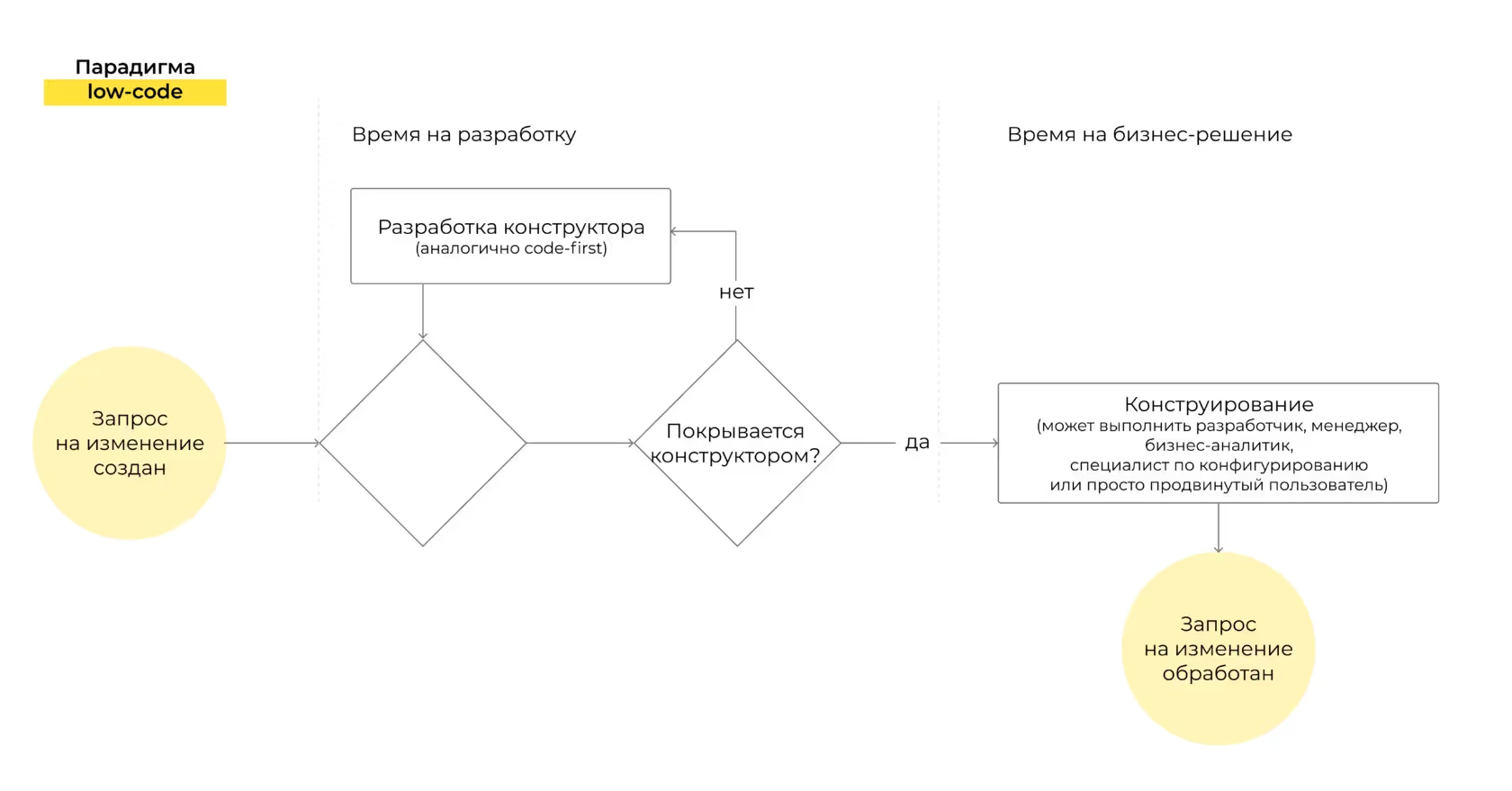

When a new requirement comes in, you either assemble the new functionality in the existing constructor or add the missing activities and components to it.

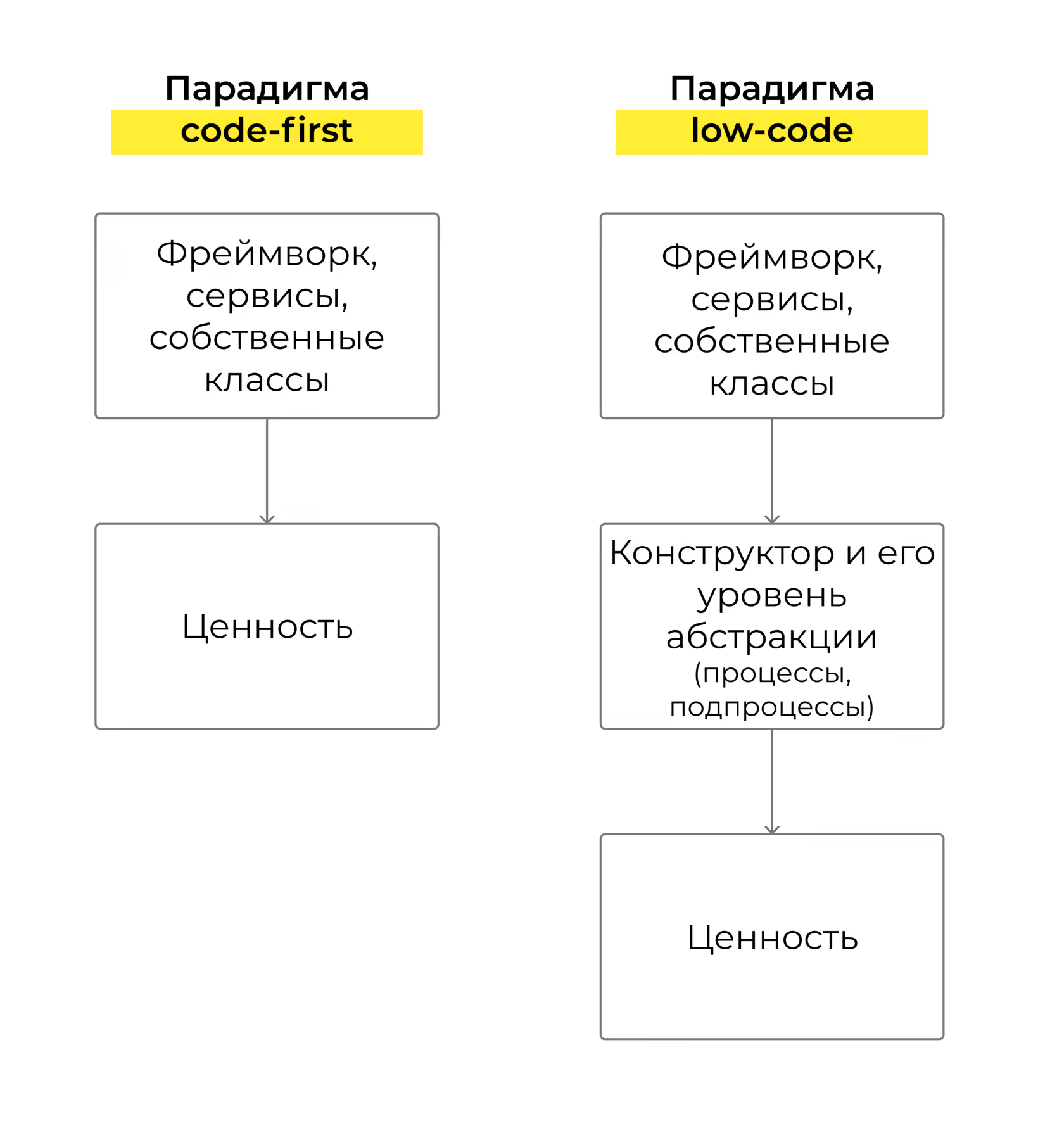

If you look at low-code development through the eyes of a code-first developer, the first thing that changes is the level of component abstraction. In addition to the usual services, libraries, and designers, there is another, higher level of abstraction — the abstraction of business logic.

This is true both when using ready-made LCDPs and when developing in the low-code paradigm yourself.

Low-code is confused with mature code-first platforms

In discussions, I often see low-code equated with any highly flexible system: for example, Bitrix (because “it also has business processes and table modeling”) or WordPress.

An LCDP is a platform where everything is built around the constructor. Otherwise, sooner or later, maintaining the platform turns into code-first development.

I would single out the following criteria for an LCDP.

- The system core consists only of constructor elements and whatever supports them, so you never have to fall back on a code-first approach to implement what the LCDP is designed for.

- In the visual editor, you can insert code wherever you need it. Some platforms also let you partially override the code of an existing component.

Here are some of our favorite examples.

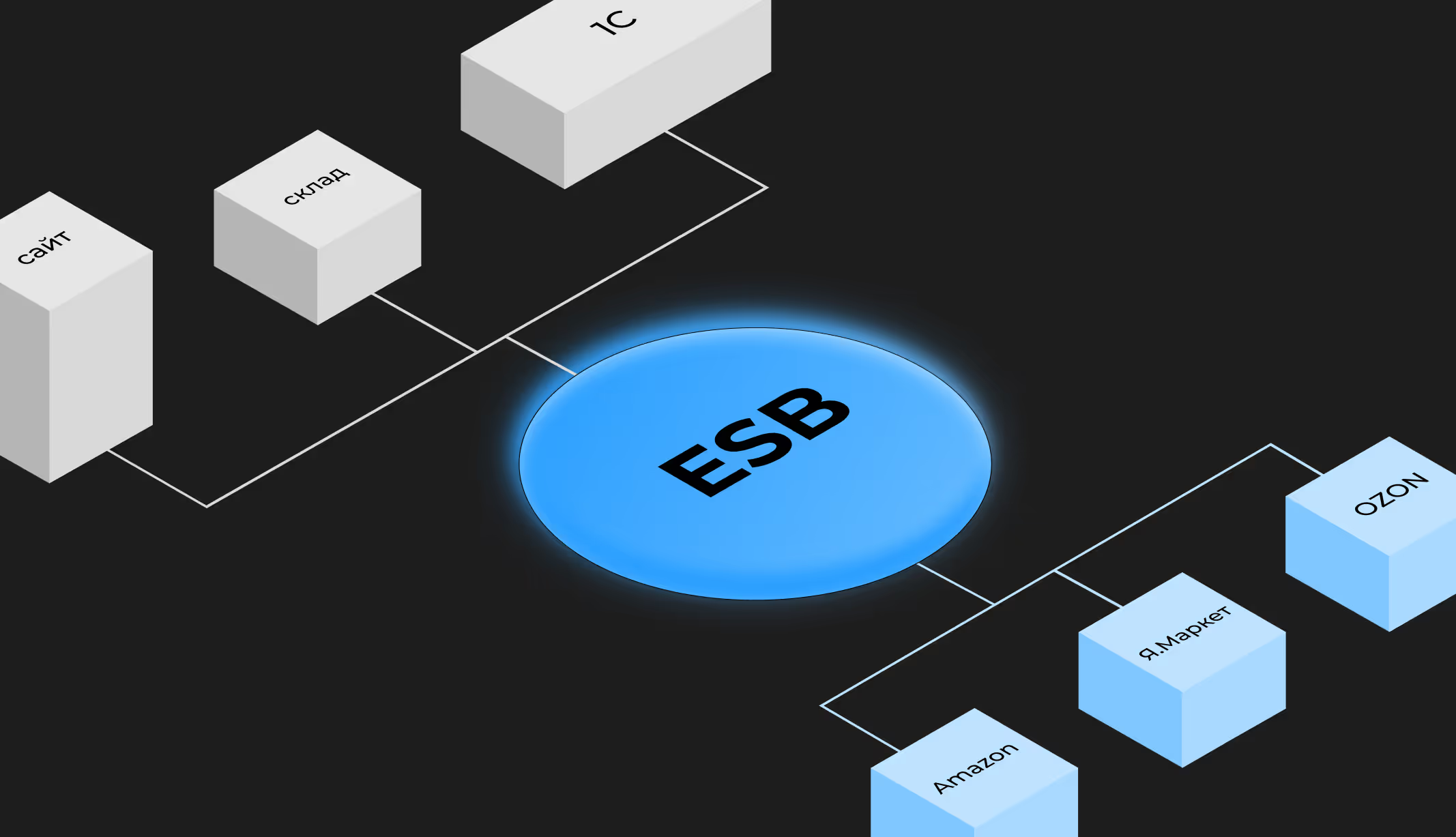

- ETL/ESB: Talend and WSO2, low-code tools for building integrations.

- Mendix, Pega, Appian, OutSystems, Caspio: platforms for building applications of various classes.

- Reify, Builder.io, Bildr: for the front end.

- Newcomers as of 2021: Corteza (fully open source, Go + Vue.js) and Amazon Honeycode.

- For gamers: when did you last take a look at Unity and its products? Have you seen Construct?

- Russian platforms: ELMA BPM, Creatio (by Terrasoft), Comindware, and the general-purpose CUBA Platform and Jmix.

- Products from particularly large vendors: Microsoft Power Apps, Oracle APEX, Salesforce Platform, IBM BAS, SAP BTP.

- Partly open source: Builder.io (front end), Bonita, Joget.

There are also borderline cases. Take Pimcore: it is essentially low-code when it comes to workflows and to modeling information models with calculated fields (with some caveats, but those are beyond the scope of this article). Try to go beyond that, and you'll end up doing traditional monolith maintenance.

Or Bitrix. It is convenient for modeling data and building business processes, into which you can embed PHP code in a low-code style if needed (i.e., without lengthy initialization). However, the huge amount of out-of-the-box functionality and the lack of advanced low-code tools in other areas push you back to traditional code-first development.

In short, if you hear “we tried a low-code platform and it failed”, I suggest looking into the details. Perhaps:

- it wasn't LCDP;

- it was a really bad LCDP (there are plenty of those);

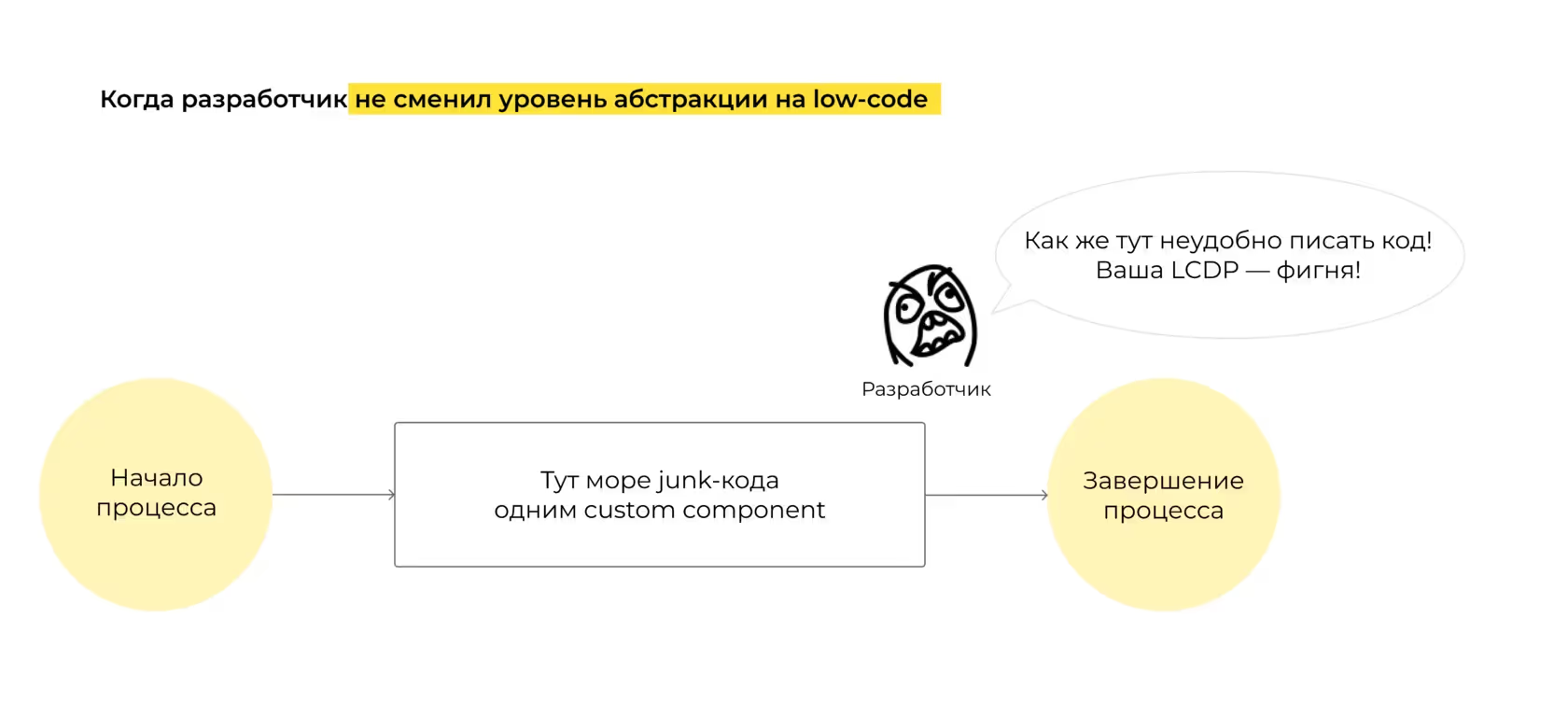

- the team wrote more code in the LCDP than it needed to: instead of reusable components, there was one custom component with a huge wall of code. We've seen this happen!

Low-code lacks the traditional practices developers are used to (code review, deployment)

This is a myth. You'll find these capabilities in the vast majority of platforms. Many of them (such as Mendix and Pega) have their own CI tooling with low-code elements.

That said, reviews are not always easy. Component code is straightforward: it is reviewed just as in traditional code-first development. What is done in the constructor is another matter.

Imagine you built a shooter in Unity, changed some walls, added mountains, and then saved everything and sent it all for review. Obviously, you won't version every change to every wall, so you'll end up with huge change sets that are quite hard to make sense of, especially when they include visual changes and code insertions. The more changes go into each commit, the harder the review becomes.

Reuse is usually fine too: you can use processes as subprocesses, call one “job” from another, and so on. If you set things up properly, reuse won't be a problem.
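A minimal sketch of subprocess reuse, assuming a hypothetical format in which a visual editor saves each process as a list of steps and a `call` step runs another named process. None of these names come from a real LCDP.

```python
from typing import Any, Dict, List

# Hypothetical process definitions stored as data, as a visual editor might save them.
PROCESSES: Dict[str, List[Dict[str, Any]]] = {
    # A shared subprocess, defined once.
    "notify_manager": [
        {"type": "log", "message": "manager notified"},
    ],
    "approve_invoice": [
        {"type": "log", "message": "invoice checked"},
        {"type": "call", "process": "notify_manager"},
    ],
    "approve_leave": [
        {"type": "log", "message": "leave request checked"},
        {"type": "call", "process": "notify_manager"},
    ],
}

MAX_DEPTH = 10  # guards against a process accidentally calling itself forever


def run(name: str, ctx: Dict[str, Any], depth: int = 0) -> Dict[str, Any]:
    """Execute a named process; 'call' steps run another process as a subprocess."""
    if depth > MAX_DEPTH:
        raise RecursionError(f"subprocess nesting too deep at {name!r}")
    for step in PROCESSES[name]:
        if step["type"] == "log":
            # Build a new context instead of mutating the caller's dict.
            ctx = {**ctx, "log": ctx.get("log", []) + [step["message"]]}
        elif step["type"] == "call":
            ctx = run(step["process"], ctx, depth + 1)
        else:
            raise ValueError(f"unknown step type {step['type']!r}")
    return ctx


print(run("approve_invoice", {})["log"])  # ['invoice checked', 'manager notified']
print(run("approve_leave", {})["log"])    # ['leave request checked', 'manager notified']
```

Because `notify_manager` is defined once, changing it changes the behavior of both approval processes without touching either of them.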

Developers are better off writing code that delivers finished value than building constructors

Developers are often put off by the very idea of clicking things with a mouse. Yet the vast majority of a developer's tasks are not rocket science but routine: the kind that isn't interesting to dig into and that you want to get rid of as soon as possible.

Within a project, your choice of tasks is limited. You are unlikely to get only interesting, creative tasks (and if you do, it means someone else is doing the boring ones, and everything above applies to that poor person instead). It is almost impossible to avoid small, often repetitive, boring issues entirely, simply because someone has to deal with them.

How does the situation change with an LCDP? Boring tasks don't go away and come up just as often, but instead of spending hours on them, you can close them many times faster or even hand them off to a non-developer. Why hand-code yet another integration between systems when you can build it faster with an ETL tool? Why fill a sprint with building a new screen when it can be assembled in a visual designer?

The faster you complete boring tasks, the more time you have for interesting ones. Moreover, you will statistically get interesting tasks more often, because routine will take up a much smaller share of your work.

What about really talented developers, those who handle even the hardest tasks elegantly and quickly?

After delivering a task in code and receiving a change request six months later, they face the following:

- we have to remember how this was written;

- we ought to refactor it, since code quickly becomes outdated.

But very few teams get approval for refactoring, and few business users will understand why a minor edit has been estimated at several days.

What's the result? We wanted to write interesting code, but after a while we aren't writing anything interesting at all. We are left with operational support and regret that things here are no longer very elegant.

If you think in LCDP terms, the benefit is not only that routine tasks get solved faster. Refactoring happens not at the level of the final deliverables but at a higher level of abstraction: in the implementation of the constructor's components. As a result, you have to think and design more. Fewer areas escape your oversight, and it is much harder to produce a hard-to-maintain solution in an LCDP, since even non-developers can spot bad naming or bad logic. The approach itself pushes you to think in abstractions.

A lyrical digression. Many team leads solve this problem for themselves personally: other team members do the implementation while they do the thinking. Nothing good comes of this. Such team leads often lose touch with the code, and the team as a whole becomes more fragile: the antifragility of these leads comes at the expense of the other team members, who end up acting as typists. Both traditional and low-code teams can suffer from this.

There's no need to understand low-code; it's a passing fad. We'd better keep coding as usual

You can avoid thinking about the future of development if you assume that:

- the number of tasks for developers will grow at least as fast as the number of developers and development substitutes (taking increased labor productivity into account);

- businesses will be able to pay for ever-growing development costs without going broke (i.e., ROI in IT will always be positive);

- other types of investment will not compete with investment in IT.

Let's do a thought experiment and figure out how likely this is in real life.

The number of tasks for developers will grow at least as fast as the number of developers and development substitutes

Currently, the global shortage of developers is about 10%. I can't predict the future, but I can imagine what conditions would be needed for this shortage to persist forever.

First, developer productivity must not improve enough to close the gap (i.e., by about 10% relative to current performance).

Second, the number of developers must not grow faster than the number of tasks. In addition, development substitutes must not reduce the shortage either.

This contradicts the basic law of supply and demand, under which supply and demand tend toward an equilibrium.
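For the first condition, the required productivity gain depends on how the ~10% shortage is measured, which the text leaves open. As a worked check, write $D$ for demand and $S$ for supply, both in developer-equivalents (notation introduced here for illustration only):

$$
\frac{D - S}{S} = 0.10 \;\Rightarrow\; \frac{D}{S} = 1.10
\qquad\text{vs.}\qquad
\frac{D - S}{D} = 0.10 \;\Rightarrow\; \frac{D}{S} = \frac{1}{0.90} \approx 1.11
$$

If the shortage is counted against supply, a 10% productivity gain closes it exactly. If it is counted against demand, a gain of roughly 11% is needed. Either way, the required gain is on the order of 10%.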

What do we see now?

- A huge number of people are either considering a switch to IT or are already retraining. According to surveys, one in five developers has no specialized education and entered IT after taking a course. And that's not counting basic programming classes starting as early as kindergarten and school, or the growing number of IT students at universities. IT is starting to draw potential specialists away from other professions. This flywheel spins slowly but surely.

- In investment bank reports, the market for no-code solutions (direct substitutes for development) already looks something like this. And if you look at this list, you'll see that the number of products keeps growing. Search for “low-code/no-code” together with the name of any company, and you'll see that almost everyone, from Russia's Sberbank to America's Apple, is thinking about how to significantly increase development productivity.

- Finally, the labor-market imbalance is easy to see in the pay ratio between managers (who carry more responsibility and are accountable for multiplier effects) and mid-level developers (who carry significantly less responsibility). Their incomes differ by a factor of 3 to 4, and this is no accident.

With about 20 million developers in the world, India alone adds a million developers to the market every year (and that number grows annually), so there is some chance that the developer shortage will not last. There are also more radical views, for example from Herman Gref or from the futurist Gerd Leonhard.
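Here is a rough check of the scale involved. It uses only the figures above and assumes (our assumption, not the source's) that the ~10% shortage is measured against the current pool of 20 million, ignoring attrition and demand growth:

$$
\frac{1\,000\,000}{20\,000\,000} = 0.05\ \text{per year},
\qquad
\frac{0.10 \times 20\,000\,000}{1\,000\,000\ \text{per year}} = 2\ \text{years}
$$

Under these assumptions, India's annual output alone equals about half of today's gap. The real outcome depends on how fast demand keeps growing in the meantime.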

Businesses will be able to pay for growing development costs without going broke

The more expensive development becomes, the wider the gap between tech companies and everyone else. The rest are forced to integrate into giant ecosystems, which achieve very high labor productivity thanks to their scale. This in itself pushes companies to migrate into other players' ecosystems.

There was a time when IT costs were negligible. But software is becoming more complex and diverse, and the cost of IT infrastructure is now a significant factor for startups and one of their main budget items. The more expensive IT becomes, the more attractive alternative investments look.

Other types of investments will not compete with IT investments

Why is there so much money in IT now? Organizations see digitalization as an investment that pays off well. The wave of digitalization will pass (every company will be digitalized to some extent, and those that aren't will leave the market), and then the solutions already deployed will have to be maintained and supported. With IT costs rising, won't the question of finding other sources of return come up?

At some point, will IT become so expensive that it automatically cuts off most of the new projects that are profitable today?

In conclusion

I recommend that developers take a look at low-code and try at least a few tasks on one of these platforms to broaden their horizons.

We should understand where low-code applies, get a cross-section of what is possible today, and learn something new. After all, that's what we are engineers for: to look at new technologies through the eyes of practitioners. You may not find a single LCDP that solves your problems, but at the very least, exploring this trend can help you develop your engineering knowledge today.